Clinical trials generate increasingly large, diverse, and continuous data streams. Traditional EDC setups rely heavily on manual validation and reactive query management, causing delays, higher monitoring costs, and site frustration.

Advanced EDC architectures reverse this pattern by embedding validation logic into the data flow itself, preventing issues before they become queries.

The Root of the Problem: Why Query Burden Expands With Scale

Query spikes aren’t a symptom of investigator negligence — they’re a structural result of outdated systems.

As trials expand in complexity (adaptive designs, decentralized models, device data, ePRO, labs), manual validation teams are overwhelmed. Common drivers include:

- Inflexible form design requiring post-facto corrections

- Slow data synchronization between systems

- Delayed lab/ePRO imports causing cascading discrepancies

- Lack of automated edit checks for dynamic study rules

- Overuse of manual monitoring for protocol enforcement

The result: a growing backlog of queries that slows SDV/SDR, pushes DBL deadlines, and strains site relationships.

Architectural Shift 1: Metadata-Driven Form Design

Metadata-configured data structures enable quality at the point of entry rather than during review.

Instead of hard-coding forms for every trial, modern EDC platforms use metadata frameworks to:

- Standardize common field libraries (CDASH-aligned)

- Auto-apply edit checks to reused form elements

- Reduce human error during configuration

- Shorten UAT cycles by reusing validated components

Impact: Fewer form-design inconsistencies → fewer data discrepancies → fewer manual queries.
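To make the idea concrete, here is a minimal sketch of a metadata-driven field library in which edit checks travel with the field definition, so every form that reuses a field inherits its checks. The names (`FieldDef`, `FIELD_LIBRARY`, `build_form`, `validate_entry`) and the numeric bounds are illustrative assumptions, not taken from any specific EDC product or from CDASH.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# A check takes the entered value and returns an error message, or None if it passes.
Check = Callable[[object], Optional[str]]


def range_check(low: float, high: float) -> Check:
    """Build a reusable range check (bounds here are illustrative only)."""
    def check(value: object) -> Optional[str]:
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return "value must be numeric"
        if not low <= value <= high:
            return f"value {value} outside expected range {low}-{high}"
        return None
    return check


@dataclass(frozen=True)
class FieldDef:
    name: str
    label: str
    checks: tuple = ()


# Hypothetical shared library: each field is defined once, with its checks attached.
FIELD_LIBRARY = {
    "SYSBP": FieldDef("SYSBP", "Systolic blood pressure (mmHg)", (range_check(60, 250),)),
    "DIABP": FieldDef("DIABP", "Diastolic blood pressure (mmHg)", (range_check(30, 150),)),
}


def build_form(field_names):
    """A form is just a list of references into the shared library."""
    return [FIELD_LIBRARY[name] for name in field_names]


def validate_entry(form, entry):
    """Run every check attached to every field present in the entry."""
    problems = {}
    for field_def in form:
        if field_def.name not in entry:
            continue
        messages = [
            msg for check in field_def.checks
            if (msg := check(entry[field_def.name])) is not None
        ]
        if messages:
            problems[field_def.name] = messages
    return problems


vitals_form = build_form(["SYSBP", "DIABP"])
print(validate_entry(vitals_form, {"SYSBP": 300, "DIABP": 80}))
```

Because the form only references library entries, a second study that reuses `SYSBP` gets the same range check without anyone reconfiguring it.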

Architectural Shift 2: Intelligent Rule & Edit-Check Engines

Validation engines now move beyond simple range and format checks.

Advanced rule engines:

- Trigger dynamic edit checks based on subject progression & protocol logic

- Enforce visit windows and dosing rules automatically

- Drive real-time cross-form & cross-variable reconciliation

- Adapt mid-trial without downtime (for amendments)

Outcome: Data inconsistencies are flagged while the site is still entering data, so most can be corrected on the spot, reducing back-and-forth communication later.
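As a rough illustration, the two functions below sketch a visit-window check and a simple cross-form check of the kind a rule engine might evaluate at entry time. The function names, the 28-day target, and the 3-day tolerance are assumptions made for the example; real windows come from the protocol.

```python
from datetime import date, timedelta


def visit_window_issue(baseline, visit_date, target_day, tolerance_days):
    """Return a message if visit_date falls outside target_day +/- tolerance_days."""
    target = baseline + timedelta(days=target_day)
    earliest = target - timedelta(days=tolerance_days)
    latest = target + timedelta(days=tolerance_days)
    if earliest <= visit_date <= latest:
        return None
    return f"visit on {visit_date} is outside window {earliest} to {latest}"


def dose_before_consent_issue(consent_date, first_dose_date):
    """Cross-form check: the dosing form must not be dated before the consent form."""
    if first_dose_date < consent_date:
        return "first dose is dated before informed consent"
    return None


baseline = date(2024, 1, 10)
# Inside the window: no message is produced.
print(visit_window_issue(baseline, date(2024, 2, 7), target_day=28, tolerance_days=3))
# Outside the window: the site sees the problem immediately.
print(visit_window_issue(baseline, date(2024, 2, 14), target_day=28, tolerance_days=3))
```

Returning a message instead of raising an exception lets the entry screen show the problem inline rather than opening a query afterwards.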

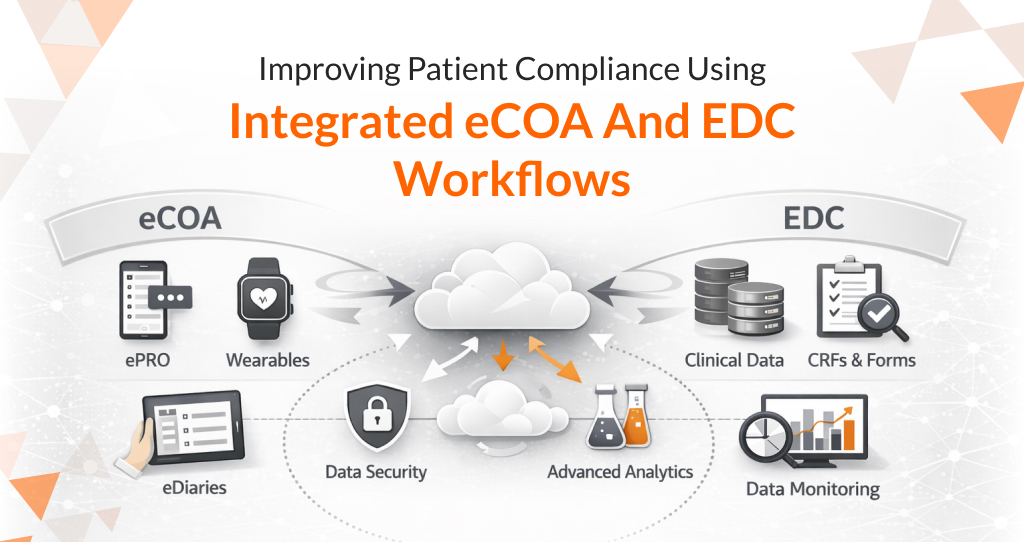

Architectural Shift 3: API-First Integration With External Data Sources

Query volume spikes when external data arrives late or mismatches EDC entries. API-first integration shortens this lag by exchanging data continuously instead of in periodic batch transfers.

API-enabled EDC systems support automated imports and near-real-time reconciliation for:

- Central labs

- eCOA/ePRO

- Wearables and RMTs

- Imaging

- Pharmacokinetics data

- Safety systems (e.g., SAE reporting)

Processing rules perform:

- Auto-mapping

- Unit harmonization

- Duplicate prevention

- Lab-range harmonization by site/geography

Result: What previously required hundreds of manual queries is converted into automated validation and alignment.
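The processing rules above can be sketched as a small import step that converts each result to one canonical unit and rejects duplicates. The record layout, the test codes (`HGB`, `GLUC`), and the function names are hypothetical; a production mapping would be driven by the lab data transfer specification.

```python
# Multipliers into one canonical unit per test.
# HGB: 1 g/dL = 10 g/L.
# GLUC: mg/dL to mmol/L, using a glucose molar mass of about 180.156 g/mol.
TO_CANONICAL = {
    ("HGB", "g/L"): 1.0,
    ("HGB", "g/dL"): 10.0,
    ("GLUC", "mmol/L"): 1.0,
    ("GLUC", "mg/dL"): 10 / 180.156,
}
CANONICAL_UNIT = {"HGB": "g/L", "GLUC": "mmol/L"}


def harmonize(record):
    """Return a copy of the record expressed in the canonical unit for its test."""
    key = (record["test"], record["unit"])
    if key not in TO_CANONICAL:
        raise ValueError(f"no conversion for {record['test']} in {record['unit']}")
    return {
        **record,
        "value": round(record["value"] * TO_CANONICAL[key], 3),
        "unit": CANONICAL_UNIT[record["test"]],
    }


def import_batch(records):
    """Harmonize units and reject duplicates; return (accepted, rejected)."""
    seen, accepted, rejected = set(), [], []
    for rec in records:
        identity = (rec["subject"], rec["test"], rec["collected"])
        if identity in seen:
            rejected.append((rec, "duplicate result"))
            continue
        try:
            accepted.append(harmonize(rec))
        except ValueError as exc:
            rejected.append((rec, str(exc)))
            continue
        seen.add(identity)
    return accepted, rejected


batch = [
    {"subject": "001", "test": "HGB", "collected": "2024-03-01", "value": 13.5, "unit": "g/dL"},
    {"subject": "001", "test": "HGB", "collected": "2024-03-01", "value": 13.5, "unit": "g/dL"},
    {"subject": "002", "test": "GLUC", "collected": "2024-03-01", "value": 90, "unit": "mg/dL"},
    {"subject": "003", "test": "GLUC", "collected": "2024-03-02", "value": 5.1, "unit": "mmol/l"},
]
accepted, rejected = import_batch(batch)
for rec, reason in rejected:
    print(rec["subject"], reason)
```

Unknown units are rejected with a reason rather than guessed at, so a mapping gap surfaces as one configuration fix instead of many site queries.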

Architectural Shift 4: Central Quality Dashboards & Statistical Monitoring

Data managers don’t need a full export to find issues — the system surfaces anomalies itself.

Modern EDCs incorporate:

- Statistical checks on outlier patterns

- Form and visit completion risk heatmaps

- Site performance indicators for late or high-query sites

- Auto-flagging of unexpected trends

This enables proactive intervention instead of reactive validation, often catching an emerging problem at one site or visit before it propagates through the rest of the dataset.
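One simple way to surface an unusual site is a robust outlier score on a per-site metric. The sketch below uses the modified z-score based on the median absolute deviation, with the commonly cited 3.5 cutoff; the metric (queries per 100 data points), the site IDs, and the function name are assumptions for illustration, and a real system would likely combine several indicators.

```python
from statistics import median


def flag_outlier_sites(rates, threshold=3.5):
    """Flag sites whose metric is far from the study-wide median.

    Uses the modified z-score: 0.6745 * (x - median) / MAD.
    """
    values = list(rates.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No spread to measure against; this sketch flags nothing in that case.
        return []
    flagged = []
    for site, value in rates.items():
        score = 0.6745 * (value - med) / mad
        if abs(score) > threshold:
            flagged.append(site)
    return sorted(flagged)


# Hypothetical queries per 100 data points, by site.
query_rates = {"S01": 0.8, "S02": 1.1, "S03": 0.9, "S04": 1.0, "S05": 4.2}
print(flag_outlier_sites(query_rates))
```

The median-based score is used here because a single extreme site inflates a mean and standard deviation enough to hide itself, which matters when a study has only a handful of sites.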

Architectural Shift 5: Embedded Protocol Logic & Workflow Automation

The EDC itself now enforces protocol behavior — reducing operational error and manual oversight.

Examples:

- Auto-assignment of follow-up forms based on AE grade

- Auto-scheduling of unscheduled visits after specific triggers

- Auto-notifications for missing or overdue CRFs

- Dependent fields shown, hidden, or required automatically based on prior submissions

Impact: Site users encounter fewer opportunities to enter incomplete or inconsistent data.
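Two of the examples above, follow-up forms driven by AE grade and notifications for overdue CRFs, can be sketched in a few lines. The grade thresholds and the form names (`AE_FOLLOW_UP`, `SEVERE_AE_REVIEW`) are hypothetical; in practice the protocol defines which grade triggers which form.

```python
from datetime import date

# Hypothetical rule table: (minimum grade, form to assign).
FOLLOW_UP_BY_MIN_GRADE = [
    (3, "SEVERE_AE_REVIEW"),
    (2, "AE_FOLLOW_UP"),
]


def forms_for_ae(grade):
    """Return the follow-up forms to assign for an adverse event of this grade."""
    if not 1 <= grade <= 5:
        raise ValueError(f"AE grade must be between 1 and 5, got {grade}")
    return [form for min_grade, form in FOLLOW_UP_BY_MIN_GRADE if grade >= min_grade]


def overdue_forms(expected, today):
    """expected maps form name to (due_date, submitted); return overdue form names."""
    return sorted(
        name
        for name, (due, submitted) in expected.items()
        if not submitted and due < today
    )


print(forms_for_ae(3))
print(overdue_forms(
    {
        "VITALS_W4": (date(2024, 2, 7), True),
        "LABS_W4": (date(2024, 2, 7), False),
        "ECG_W8": (date(2024, 3, 6), False),
    },
    today=date(2024, 2, 20),
))
```

Keeping the rules in a table rather than in branching code means a protocol amendment changes data, not logic.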

Why Query Reduction Matters Beyond Efficiency

Reducing query burden generates value beyond speed:

- Higher site satisfaction → faster enrollment and better compliance

- More predictable study timelines → smoother regulatory submissions

- Lower monitoring overhead → frees budget for scientific priorities

- Cleaner interim data → stronger decisions for adaptive designs

Less query processing is not just a productivity win — it directly improves research outcomes.

Conclusion: Data Quality at Scale Requires Smarter Systems, Not More People

As trials become more decentralized, data-rich, and adaptive, manual review won’t scale. The EDC must do more than store data — it must actively protect data integrity throughout the trial lifecycle.

Advanced EDC architectures achieve this by:

- Validating data at the point of origin

- Automatically reconciling external feeds

- Surfacing anomalies statistically rather than manually

- Enforcing protocol constraints inside the system

The future of data quality is proactive, automated, and architectural — not reactive and operational.

About Octalsoft

Octalsoft delivers a unified, cloud-based clinical data platform designed to simplify trial execution and accelerate database lock. Its next-generation EDC architecture enables real-time validation, metadata-driven form design, automated reconciliation of external data sources, and centralized monitoring dashboards — dramatically reducing query burden and site workload. With seamless integrations across CTMS, IWRS, ePRO, eTMF and Safety, Octalsoft’s EDC ensures cleaner data, faster decision-making, and consistent inspection-readiness for sponsors and CROs of all sizes.